The ExACT Tools for Safe Autonomy

https://youtu.be/bE2UpKHxsLg

DARPA’s Assured Autonomy Program Greenlights HRL Laboratories’ Algorithmic Toolkit

HRL Laboratories, LLC, will join the Defense Advanced Research Projects Agency (DARPA) in its Assured Autonomy (AA) program with the Expressive Assurance Case Toolkit (ExACT) project, a set of mathematical tools for verifying that any autonomous vehicle’s guidance algorithms are correct and safe.


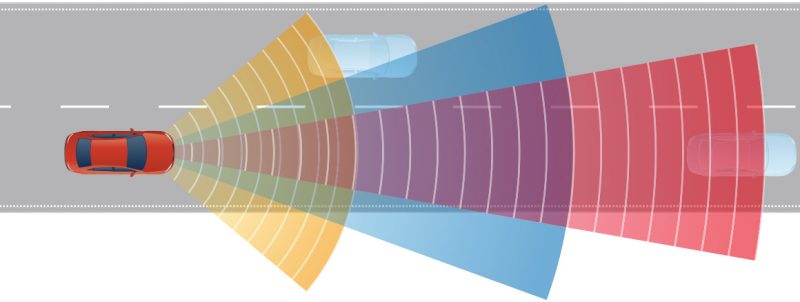

Self-driving cars are a reality, although fully autonomous vehicles are still in their infancy. Moving safe and reliable autonomous vehicle technology beyond the testing stages and into the marketplace is potentially world-changing, but only if the vehicles work as they are supposed to. Ensuring that autonomous vehicle systems perform as programmed without any unsafe behavior is the basis of DARPA’s AA program.

“The techniques we’re using are about mathematically verifying that the algorithms on autonomous systems lead to safe and reliable system behavior,” said Michael Warren, HRL’s principal investigator for ExACT and co-project manager. Joining Warren as project manager and co-principal investigator is Aleksey Nogin.

“This is also taking into account the physics of the system. So if the system is for driving a car, we’re asking ‘what are the dynamics of the car, how does it behave on the road based on surface conditions, and how does that interact with the driving algorithms?’ We’re determining how to verify mathematically that if the car is following its algorithms and dynamics model it will operate safely,” Warren said.

“Verification of vehicle autonomy has different stages, and the ExACT program is divided according to those stages. One is static verification, which comprises descriptions of the algorithm or code and its safety specifications, such as not skidding off the road or not rear-ending a forward car. Then comes the physics model of the vehicle, for which we build a mathematical proof that if the autonomous system follows this algorithm, the system cannot violate the safety specifications,” Warren said.
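As a purely illustrative example of the kind of statement such a proof establishes (it is not taken from ExACT; the constant-deceleration braking model and the symbols $v_0$, $d_0$, $\mu$, and $g$ are assumptions introduced here), consider the specification of not rear-ending a stopped car. Suppose the vehicle travels at speed $v_0$ with a gap $d_0$ to the car ahead, and its algorithm brakes at the deceleration $\mu g$ permitted by a friction coefficient $\mu$:

$$
v(t) = v_0 - \mu g\,t, \qquad x(t) = v_0 t - \tfrac{1}{2}\mu g\,t^2, \qquad 0 \le t \le t^* = \frac{v_0}{\mu g}
$$

$$
x(t^*) = \frac{v_0^2}{2\mu g}
$$

Because $x(t)$ is nondecreasing until the vehicle stops at $t^*$, the condition $v_0^2 < 2\mu g\,d_0$ implies $x(t) < d_0$ for all $t$, so under this simplified model the specification cannot be violated. A real assurance case would need a far richer dynamics model, but the shape of the argument is the same: algorithm plus physics model implies the safety specification.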

Truly autonomous vehicles will require mathematical assurance that their systems perform as programmed without any unsafe behavior. © 2018 HRL Laboratories.

Runtime monitoring is the stage that ensures safety specifications are not violated while the system is actually running, which is crucial for autonomous systems that might still be learning at runtime. Proving many safety properties requires hypotheses about operating conditions, for example road conditions, which might include such elements as friction coefficient, tire condition, or tire responsiveness. As long as those hypotheses hold, system behavior is mathematically guaranteed, and runtime monitoring is responsible in part for tracking whether they remain valid.
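The idea in the paragraph above can be sketched in a few lines of Python. This is a minimal illustration, not ExACT code: the `Reading` type, the `check` function, the status strings, and the idealized braking-distance formula are all hypothetical choices made for the example. The monitor first checks the hypothesis (the road is at least as grippy as the proof assumed) and only then checks the safety specification (the gap to the car ahead exceeds the worst-case stopping distance).

```python
from dataclasses import dataclass

G = 9.81  # gravitational acceleration in m/s^2


@dataclass
class Reading:
    """One sensor snapshot (hypothetical fields for this sketch)."""
    speed_mps: float  # current vehicle speed
    gap_m: float      # distance to the car ahead
    friction: float   # estimated tire-road friction coefficient


def stopping_distance(speed_mps: float, friction: float) -> float:
    """Idealized braking distance v^2 / (2 * mu * g) on a flat road."""
    return speed_mps ** 2 / (2.0 * friction * G)


def check(reading: Reading, assumed_min_friction: float) -> str:
    """Classify a reading against the hypothesis and the safety spec.

    assumed_min_friction is the (positive) lower bound on friction that
    the offline proof is assumed to rely on.
    """
    # Hypothesis check: if the road is slipperier than assumed,
    # the offline guarantee no longer applies.
    if reading.friction < assumed_min_friction:
        return "hypothesis_violated"
    # Specification check, using the worst case the hypothesis allows.
    if reading.gap_m <= stopping_distance(reading.speed_mps, assumed_min_friction):
        return "spec_violated"
    return "ok"


if __name__ == "__main__":
    print(check(Reading(speed_mps=20.0, gap_m=60.0, friction=0.8), 0.5))
    print(check(Reading(speed_mps=20.0, gap_m=60.0, friction=0.3), 0.5))
```

Separating the two outcomes matters: a violated hypothesis does not mean the vehicle is unsafe, only that the mathematical guarantee has lapsed and a fallback behavior may be needed.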

“We don’t know which platforms DARPA will select for testing and evaluation,” Warren said. “ExACT is not specific at all and can be applied to any autonomous platform.”

HRL Laboratories, LLC, California (hrl.com) pioneers the next frontiers of physical and information science. Delivering transformative technologies in automotive, aerospace and defense, HRL advances the critical missions of its customers. As a private company owned jointly by Boeing and GM, HRL is a source of innovations that advance the state of the art in profound and far-reaching ways.

Media Inquiries: media[at]hrl.com, (310) 317-5000